April, 1988: Victor Herbert Goes Rogue

The inventor Paul LeRoy (28 at this time and, therefore, currently unaware of his own death) met up with our percussionist Schiing the other day. They discussed, amongst other things, the incident that shook the time-travelling community last month (or, last month thirty-five years ago, to be precise), when a young and out-of-time Victor Herbert somehow managed to delay David Mamet’s play Speed-the-Plow.

It was rescheduled from April 13 to May 3, 1988, and it caused all kinds of havoc in the time-space continuum. Safe to say, Herbert is not a popular man at this particular moment in time. Everyone involved in the business knows that if you reschedule a Mamet play by a certain number of days, all his other plays will be rescheduled accordingly. This interferes with the actors’ schedules, and, because these are often big names involved in a variety of projects, important movies and plays will be moved or cancelled.

Indeed, Mamet wrote Our American Cousin under his Tom Taylor pseudonym in 1857, and as a consequence this episode reintroduced the 1865 Abraham Lincoln assassination to history. This hasn’t occurred since the George VI tea incident.

One can only speculate what was on Herbert’s mind, but it is well known that he has become a bitter man since learning about his fate and reputation post-Eileen (1917), when he was forced to compose in a simpler style in a misguided attempt to pander to newer musical sensibilities. Many believe that he was also responsible for the recent HarperCollins Bridgerton misprint scandal.

LeRoy and Schiing also discussed the impact of fake Remo conga skins in the broader context of pop and easy listening, and how this might have affected the popularity of Peter Allen’s classic live version of I Go to Rio across the different time rifts.

We hope to bring you a YouTube video of the entire discussion soon.

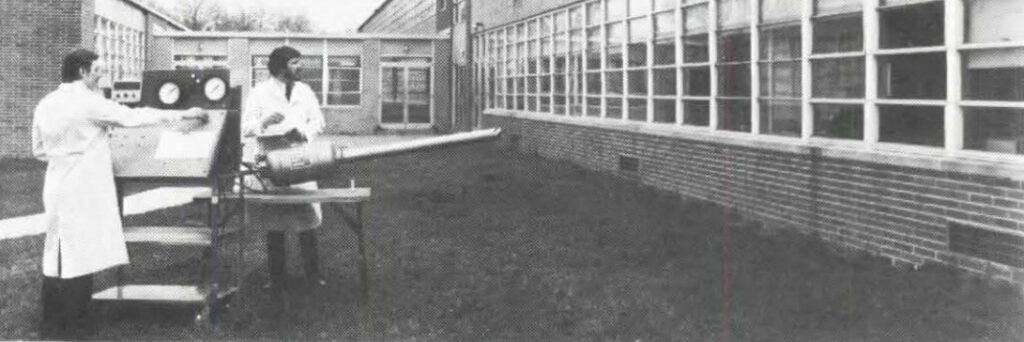

It’s been a few years since our last post. You may remember Paul LeRoy (arrow) from our

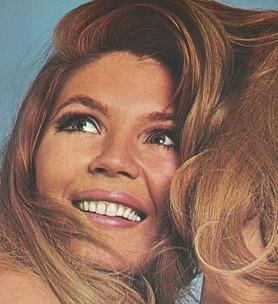

Knob manufacturers Arton and Leonard Brother have negotiated a deal with Simon Cowell for a new television show.

Pop darling Shaoncé has revealed details about her new album scheduled for release in early 2012. After the tremendous success of her previous album, Shock, people are curious to see if she can live up to the hype, but judging by yesterday’s press conference in Paris there is no need for concern. Sporting an outfit that would make Lady Gaga proud, the young singer won the world press over with her sharp wit and acute observations. Her warm, conversational style created a unique and friendly atmosphere, and even old codgers like myself had to let down our professional guard a bit to enjoy the exhilarating intelligence and humanity of the encounter.

As a self-proclaimed inventor focusing on time/space issues, Paul LeRoy (36) has a dubious record. Following his amazing discovery that it is possible to move chromium through time, further research has provided little insight — and it has indeed been claimed that the spectacle surrounding his Cr24 AnaChrom experiments was never anything but a carefully considered marketing plan masterminded by his sponsors, and that the entire project was a hoax. When LeRoy’s book “Time As I See It” was released three years ago, he was written off as a hack and a madman by peers in the National Society of Inventors.